IBM Bans Siri at the Workplace, Says it’s a Security Risk

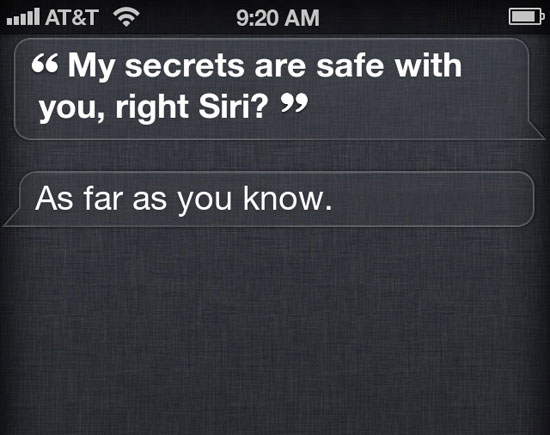

For some, Siri is an invaluable assistant, allowing for easy searching of the web and email dictation. For others, it’s a source of constant entertainment as it bumbles its way through human communication. For IBM, Siri is a security risk, so much so that the company has banned the use of Apple’s voice assistant at the workplace.

In an interview with the MIT Technology Review, IBM’s chief information officer Jeanette Horan said that Siri, along with a host of other potentially secret-leaking services like Dropbox, is banned in IBM offices. From the article:

Before an employee’s own device can be used to access IBM networks, the IT department configures it so that its memory can be erased remotely if it is lost or stolen. The IT crew also disables public file-transfer programs like Apple’s iCloud; instead, employees use an IBM-hosted version called MyMobileHub. IBM even turns off Siri, the voice-activated personal assistant, on employees’ iPhones. The company worries that the spoken queries might be stored somewhere.

“We’re just extraordinarily conservative,” Horan says. “It’s the nature of our business.”

The concern for IBM is that everything you say into Siri is stored at an Apple data center. For those unfamiliar, Siri works by recording your query, whisking it away to a data processing center over your data connection, and then returning the results. Much of the heavy lifting of natural language processing happens on remote servers, not just on your phone.

Apple stores your voice queries at its data center in order to improve Siri’s results. The idea is that all those question and answer sessions we have with Siri could eventually be used to make Siri more clever and responsive. Siri also sends some personal information, like nicknames and location, which Apple stores as well, apparently in a semi-anonymous manner.

In fact, as Wired points out, all this is spelled out in the iPhone Software License Agreement, a document no one in their right mind reads when purchasing an iPhone. From the agreement:

When you use Siri or Dictation, the things you say will be recorded and sent to Apple in order to convert what you say into text and, for Siri, to also process your requests. Your device will also send Apple other information, such as your first name and nickname; the names, nicknames, and relationship with you (e.g., “my dad”) of your address book contacts; and song names in your collection (collectively, your “User Data”). All of this data is used to help Siri and Dictation understand you better and recognize what you say. It is not linked to other data that Apple may have from your use of other Apple services.

What’s missing in this disclosure is what that information is used for, how long it’s stored for, and whether or not those queries can be traced back to you.

Before you freak out and disable Siri, it’s important to remember that for an everyday person, the information stored by Apple is extremely vague. A series of questions about the weather or telling Siri to search the web for pictures of Eddie Money doesn’t reveal a whole lot about you. However, using Siri’s dictation feature to write private emails could be a smidge disconcerting for some.

Also, Wired points out that the location data might give away key business dealings if it were linked back to the speaker.

While we’ve seen that even disparate information from cellphones can tell you a lot about a person, that relies on being able to tie the information to an individual. While Apple may be storing your Siri queries, those scraps of information may not be organized. Trying to piece them together might be like trying to reconstruct a letter from a bag of shredded documents. Given the flak that Apple took for its location services “tracking” people last year, the company has probably taken great pains to keep what information it has safe and obscure.

While IBM may not trust Siri, I probably won’t be staying up late fretting over whether or not my iPhone is building up a dossier of my silly questions. But then again, I don’t have billion-dollar business deals and corporate secrets to worry about.

(Wired, MIT Technology Review, totally fake screenshot via iFakeSiri)
