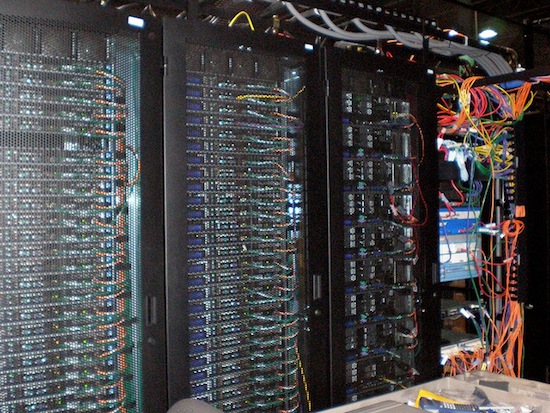

Data Centers Waste Most of the Energy They Consume

Cloud storage is the future. That’s what every tech company, media outlet, and anyone who generally cares about technology has been saying for the last couple of years. From Netflix to Amazon, from Microsoft to Google, companies are falling over themselves to give users online access to as much data as they possibly can. Cloud storage is by far the most convenient way to get at any type of file you could ever want, but that amount of freedom doesn’t come without a cost. According to The New York Times, most data centers waste a whopping 90 percent of the energy they pull from the power grid.

A large part of the problem is that data centers, the places that store all those files we may or may not want access to at any given moment, run at their maximum output all day, every day. While I think it wouldn’t be insane to assume that at least a few people are using Netflix at every moment of every day, data centers need to be prepared for millions and millions of users at any given moment.

According to the Times’ report, there are ways that most data centers could improve their efficiency and reduce their carbon footprint. Unfortunately, it’s very unlikely that any of these operations would implement such measures willingly. Data centers, given that they often store thousands of users’ personal information, are not prone to discussing their systems. Many also rely on proprietary technology, which they may be unwilling to discuss.

It’s like the old saying: “if it ain’t broke, don’t fix it.” Unfortunately, if we don’t fix it, we’re going to need to start searching the universe for new habitable planets a whole lot sooner than we thought.

(via The New York Times, Image Credit: Sean Ellis)

- Apparently, some companies are using alternative methods to keep energy costs down.

- As it turns out, internet piracy is also bad for the environment.

- More information on the five biggest data centers in the US.
