A New Way To Solve Linear Equations Over Finite Fields

Prasad Raghavendra, an Assistant Professor at University of California, Berkeley, has found a whole new way of looking at and solving linear equations. Current methods of solving linear equations involve approximating the solution and improving upon it iteratively, but Raghavendra’s approach seems to have eliminated the need for guesswork. Here, have some math.

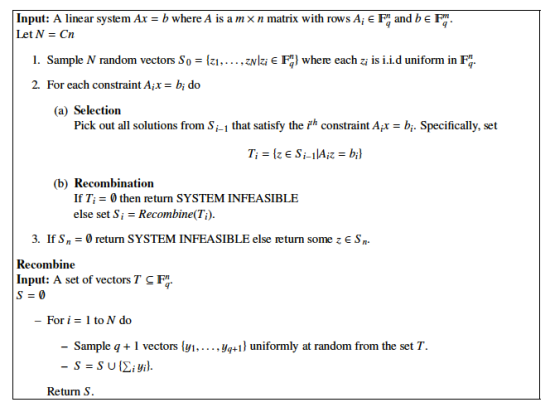

We usually use stuff like the Jacobi Method, where you have to guess the solution before you improve upon it over and over again until you converge upon an answer, or until you’re sufficiently satisfied with its accuracy. Raghavendra’s method instead uses the concept of randomness to narrow down a set of solutions. Initially, it picks a set of random vectors. Then it keeps only those vectors that are solutions to the first equation. It repeats this for the second equation, using randomly chosen linear combinations of the solutions to the first equation, instead of the random vectors. And so on, essentially “pick, remove, combine.”

I suppose picking random vectors is sort of like guessing, but now the number of iterations no longer depends on how accurately you can estimate the solution.

Dick Lipton talked about this in his blog, his analysis far more sophisticated than anything my college-level Linear Algebra can muster. He’s a Professor of Computer Science at Georgia Tech, and had personally talked to Raghavendra on this, so you’re in good hands. Go read his post, and while you’re at it, check out the intensely intelligent discussion in the comments.

Raghavendra has a paper with proof that his method works for finite fields. Here’s the algorithm:

(via Gödel’s Lost Letter and P=NP)

- Accurate mathematical proof in Futurama

- Jamendo did math, wins Chrome Netbook

- This is why you don’t stop learning math

Have a tip we should know? [email protected]