German Researchers Are Building Robots That Can Feel Pain

Lately, technology keeps finding new ways to mimic the world of The Hitchhiker’s Guide to the Galaxy. First came the recent rise of universal translators, and now researchers are designing a robot that can feel pain. Admittedly, this robot doesn’t show any signs of depression the way Hitchhiker’s Marvin did, but it still mimics a variety of pain responses.

But why? Why would anyone want robots to feel pain? Not feeling any pain is supposed to be, like, the main perk of being a robot!

The two researchers behind the project have a pretty good answer to that question, believe it or not. Robots should be able to feel pain for the exact same reason humans can: a pain response signals an unsafe situation, and it tells you to get out of there. Johannes Kuehn, one of the researchers, put it this way: “Pain is a system that protects us. When we evade from the source of pain, it helps us not get hurt.” Kuehn and his co-researcher, Professor Sami Haddadin, set out to create an artificial nervous system for robots that would help them recognize when to escape.

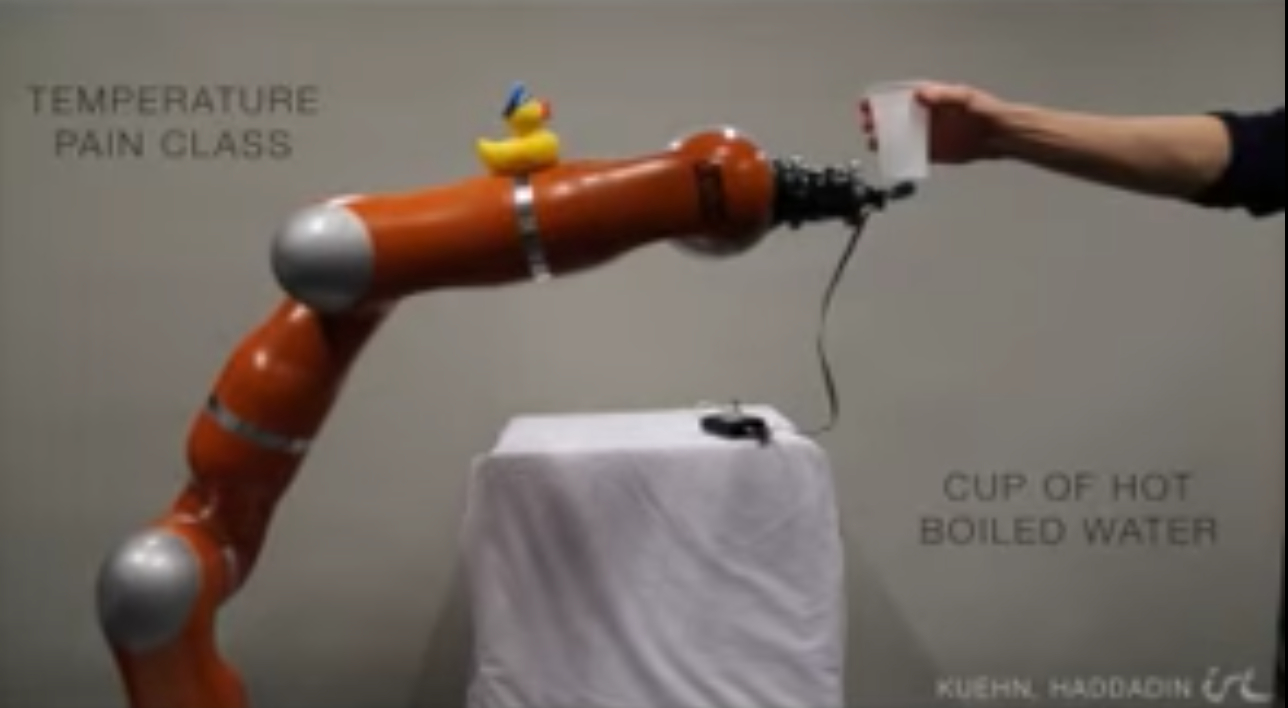

As the video above demonstrates, this robot jerks away when touched with a cup of boiling hot water, just like a person would. High heat wouldn’t necessarily affect a robot the way it would a human, but it can still cause damage, so it’s important for a robot to avoid it. The robot in the video also responds to touch by moving away, and the firmer the touch, the farther it retreats. I recoil from unwanted touching, too, so this robot seems to have a real handle on self-interest!

I’ve written many times here about the idea of consent-based programming for robots and what it might look like, and I think this is a step in the right direction on that score. In this case, the pain response was designed with industrial robots in mind. These robots should be able to tell when something is happening that makes them feel “uncomfortable” (that’s the researchers’ endearing choice of word), such as equipment going awry and bumping into them. When that happens, they’ll move away.

I guess some people who are afraid of robots will find it terrifying that we would be giving robots any means to assert themselves, no matter how small. However, in this case, we’re giving robots a chance to protect themselves, and that seems like a design ethos that I can get behind, particularly when you consider its scalability. If we want robots to be our companions, and it sure seems like we do, then we have to give them some ability to choose whether or not they want that. In this case, that means making sure that a robot can recognize a safe work environment versus an unsafe one. On a grander scale, that could mean a future robot butler would have the ability to quit their job if they didn’t like their human boss. That seems fair to me!

(via Engadget, image via Tumblr)
