Microsoft Announces New 3D Facial Modeling System That Is The Most Advanced Yet

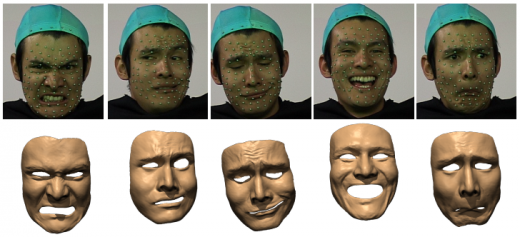

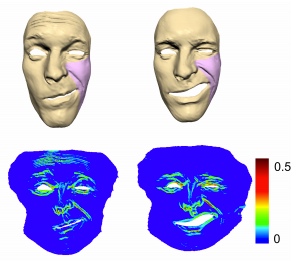

Renditions of the human form in computer-generated images keep getting more realistic. Even so, researchers at Microsoft have taken 3D modeling further than ever before by combining motion capture with 3D scanning technology to create high-fidelity 3D images of the human face. These images show the typical features and expressions we make, and they are detailed enough to include the movement of the skin, such as wrinkling, as those expressions are made.

The research, led by Xin Tong of Microsoft Research Asia, began by recording the 3D facial movements of an actor. The movements were recorded with a marker-based motion capture system, and the data was analyzed by a facial recognition program. The analysis determined which face scans were needed to represent particular facial features, and how many scans would be needed in total. A laser scanner was then used to create the high-fidelity scans, and the motion capture data was combined with the face scans.
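The article does not say how the analysis chose which scans to take, so the following is only a rough sketch of one plausible approach, not the researchers' method. It assumes each motion capture frame is a flat list of marker coordinates and uses a greedy farthest-point selection: keep adding the frame that is least well represented until every frame is within a tolerance of a chosen one. The function names, the `max_error` parameter, and the sample data are all hypothetical.

```python
import math


def frame_distance(a, b):
    """Euclidean distance between two frames (flat lists of marker coordinates)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def select_key_frames(frames, max_error):
    """Greedily pick frame indices until every frame lies within
    max_error of at least one picked frame.

    Assumption: this is an illustrative stand-in for the analysis step,
    not the algorithm used in the research.
    """
    if max_error < 0:
        raise ValueError("max_error must be non-negative")
    if not frames:
        return []

    selected = [0]  # start from the first frame (assumed to be a neutral pose)
    # nearest[i] = distance from frame i to its closest selected frame
    nearest = [frame_distance(f, frames[0]) for f in frames]

    while max(nearest) > max_error:
        # The worst-represented frame becomes the next expression to scan.
        worst = max(range(len(frames)), key=lambda i: nearest[i])
        selected.append(worst)
        nearest = [
            min(d, frame_distance(f, frames[worst]))
            for d, f in zip(nearest, frames)
        ]
    return selected


# Hypothetical data: five frames of a single 2D marker.
frames = [[0.0, 0.0], [0.1, 0.0], [5.0, 0.0], [5.1, 0.0], [0.0, 4.0]]
key_frames = select_key_frames(frames, max_error=0.5)
print(key_frames)       # indices of the expressions to scan
print(len(key_frames))  # how many scans would be needed
```

In this sketch the tolerance sets the trade-off: a smaller `max_error` asks for more scans and a closer match to the captured motion, while a larger one asks for fewer scans.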

According to Tong, the ability to realistically animate the human face is the “holy grail” of computer graphics because the face is so complex. There are 52 facial muscles responsible for moving the face into an amazing array of expressions. The technology that is currently in widespread use doesn’t come close to capturing all of these expressions.

In an article on The Next Web, Tong says:

“We are very familiar with facial expressions, but also very sensitive in seeing any type of errors. That means we need to capture facial expressions with a high level of detail and also capture very subtle facial details with high temporal resolution.”

The researchers anticipate that the new technology could be incorporated into Avatar Kinect, which was also developed by Microsoft Research. Tong says:

“The character would be virtual, but the expressions real. For teleconference applications, that could be very useful, for example, in a business meeting, where people are very sensitive to expressions and use them to know what people are thinking.”

Even though the research looks useful and already has a platform it could run on, the researchers say there is still work to be done before the technology reaches the mainstream. According to Tong, they would like the technology to capture eye and lip movements, which it currently does not. The researchers would also like to speed up the process so that it takes less time and computing power, ultimately getting to the point where it works in real time.

The research was presented by Tong and colleagues at the SIGGRAPH 2011 computer graphics conference in Vancouver, British Columbia.

(GeekWire via The Next Web)
