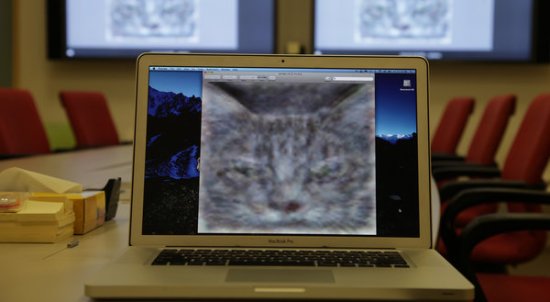

Google Brain Simulation Is Shown The Internet, Can Now Identify Cats

A team of Google scientists fed their 16,000-core brain simulation 10 million images from the Internet and told it to do whatever it wanted. So what did it do? It learned what cats look like. That picture up there is its mental picture of a cat, which appears to be a faintly annoyed tabby. If the Internet has ever actually taught us something, this is that time.

For one, we now know that YouTube really is full of cat videos. If all 10 million images had been sourced from Lolcats, this result wouldn’t be surprising, but they weren’t. The machine received 10 million 200×200-pixel images, each a thumbnail from a randomly selected YouTube video. Good job, people. Thanks to you, the largest neural network built for machine learning has just learned how to look for cats.

Also, we now know what the Internet does when no one’s looking. What makes this particular brain simulation special is that its learning is unsupervised: the data it processed was never labeled by humans to help it distinguish specific features. Everything it learned, it learned by itself. No one told it what cats were supposed to look like; it reinvented the cat. So now we know that, when left to its own devices, the Internet looks at cats (admittedly, the images were sourced from YouTube and not certain other places).

And finally, we now know the only thing holding the machines back. This neural network’s achievements were largely thanks to sheer processing power. The researchers used 16,000 cores to process and interpret the data, and even then, they note that the human brain has a million times more neurons and synapses than this simulation. David A. Bader, executive director of high-performance computing at the Georgia Tech College of Computing, thinks advances in computing technology will take care of the problem: “The scale of modeling the full human visual cortex may be within reach before the end of the decade.”

The researchers have high hopes for this technology, chiefly in image search, speech recognition and machine translation. Dr. Andrew Y. Ng, who led the research team, is more cautious, however: “It’d be fantastic if it turns out that all we need to do is take current algorithms and run them bigger, but my gut feeling is that we still don’t quite have the right algorithm yet.” Best of luck in finding that algorithm, Dr. Ng. Meanwhile, the dogs of the Internet will rally their forces.

(via The New York Times)
