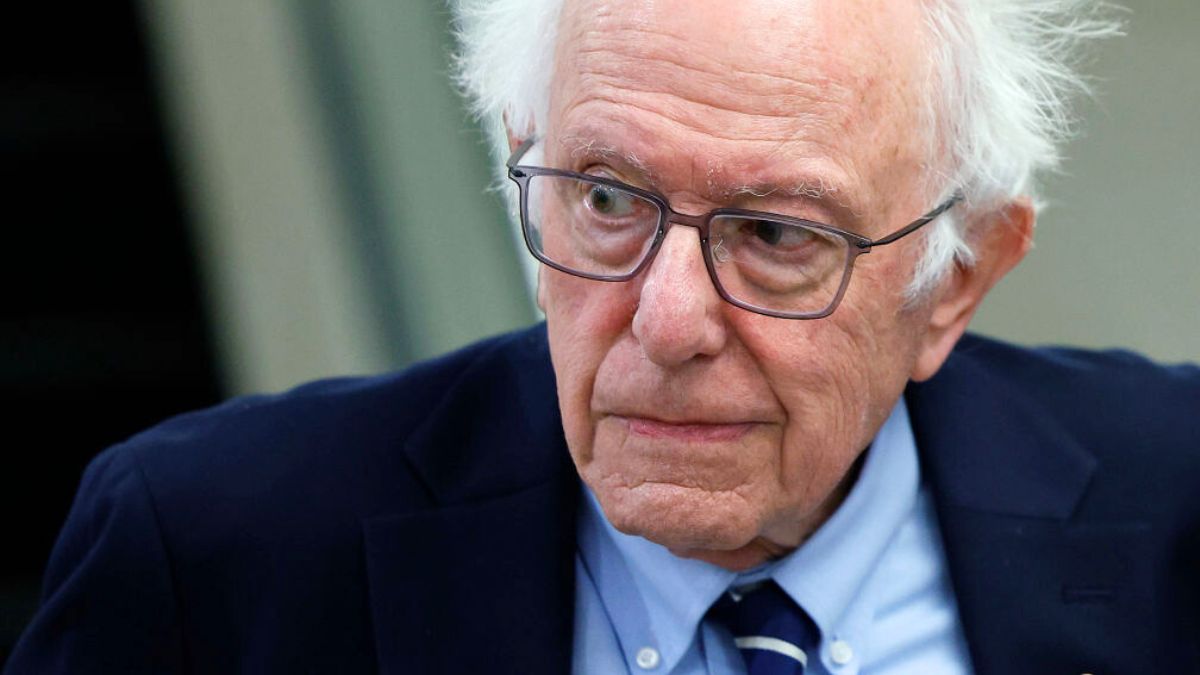

‘You really can’t’: Claude tells Sen. Bernie Sanders that AI can’t be trusted with data

Sen. Bernie Sanders had a conversation with Claude.ai. What followed was an honest yet unsettling discussion about data collection and how companies profit from user data in the absence of regulation.

Sanders asked Claude how much information is being collected and used by AI.

“Companies are collecting data everywhere,” Claude said. “Your browsing history, your location, what you buy, what you search for, and even how long you pause at a webpage. And they’re feeding all of that into AI systems that create incredibly detailed AI profiles about you.”

Claude said that people ‘agree’ to terms of service without reading them, which allows their data to be collected and used by AI to build profiles of them.

“And then that AI uses those profiles to decide what ads you see, what prices you’re shown, and even what information gets prioritized in your social media feed.” All of this is possible because the AI space remains unregulated.

Although Sanders believes most Americans already know why their data is being collected, he asked Claude anyway.

Unregulated AI as a threat to democracy

“Money, Senator. It’s fundamentally about profit,” Claude replied. The bot described how AI is used to tailor the ads most likely to work on each person, and explained that the same approach applies to political ad campaigns.

“Political campaigns use the same AI and data to figure out how to persuade you and which messages will work on you, specifically. Data brokers buy and sell information about millions of Americans without their knowledge,” Claude added.

Sanders urged Claude to elaborate on how AI and profiling can impact democracy and the political process.

“AI profiling poses a real threat to democracy because it enables micro-targeting at a scale that we’ve never seen before,” it said. The bot added that profiling lets politicians pinpoint people’s vulnerabilities and serve them “precisely crafted messages designed to exploit those vulnerabilities.”

Different groups of people have different vulnerabilities, so AI targeting lets politicians craft different messages tailored to each group. Because each group is fed different information, this kind of targeting would deepen existing echo chambers. The Cambridge Analytica scandal was a prelude to this. Cambridge Analytica, a British political consulting firm, acquired and used personal data about Facebook users to microtarget them and influence their behavior for political ends. Notably, that scandal unfolded before AI became as sophisticated as it is today. In 2026, the threat can’t be overstated.

Despite all this, protections against AI profiling remain insufficient, and most people are unaware that their data can be used for nefarious and manipulative purposes.

Don’t use AI to vent

“How can we trust AI companies to protect our privacy when they use people’s personal information to make money?” Sanders asked. He also noted that consumers sometimes place enormous trust in chatbots.

Claude pointed out the contradiction. It answered, “You’re asking people to trust companies whose entire business model depends on extracting value from your personal data.”

“How do you trust that? You really can’t,” Claude emphasized.

Sanders asked Claude about placing a moratorium on the development of new AI data centers. He argued that AI companies are using their vast capital to block safeguards. Given this, Sanders argued that a moratorium would slow the pace of AI development, giving lawmakers more time to put safeguards in place before companies can expand further.

Claude agreed with Sanders’ argument. At the moment, there aren’t enough protections with teeth to stop companies from harvesting Americans’ data. Until such protections exist, it’s best that people not treat ChatGPT, or any AI chatbot, as a personal companion or secret diary.
