Robot Chooses Its Own Movements With Neural Network, Creeps the Bejesus out of Us

If you listen closely, you can hear its existential horror.

You might ask, “Why must every robot story be about how terrifying a robot is?” To answer your question with a question, I’d have to reply, “Why must every robot be terrifying on a basic, instinctual level?”

Neural networks are a pretty interesting implementation of AI technology. They’re made to loosely mimic biological learning and thinking mechanisms like actual neurons in an organic meatbag’s brain. Very reductively: data is fed in and interpreted by “neurons,” and the network teaches itself to produce an appropriate output by trying, checking the result, and adjusting over many iterations. Basically, it’s a way for machines to learn to do things on their own instead of having specific rules written for them by humans.
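For the curious, here is roughly what "teaching itself through iterations" looks like at the smallest possible scale. This is a toy sketch of a single artificial neuron (a classic perceptron) learning the logical OR of two inputs. It is not Alter's network, and the names `train_neuron`, `predict`, and `OR_SAMPLES` are made up for illustration.

```python
def step(value):
    """Fire (1) if the weighted input is positive, otherwise stay quiet (0)."""
    return 1 if value > 0 else 0


def predict(weights, bias, inputs):
    """Run one neuron: weighted sum of the inputs plus a bias, then threshold."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train_neuron(samples, epochs=100, learning_rate=0.1):
    """Perceptron learning rule: nudge the weights whenever the guess is wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # No rule for OR is written anywhere; the weights drift toward it.
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical training data: the truth table for logical OR.
OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = train_neuron(OR_SAMPLES)
results = [predict(weights, bias, inputs) for inputs, _ in OR_SAMPLES]
print(results)
```

Nobody told the neuron what OR means. It just kept guessing and correcting until the guesses stopped being wrong, which is the whole trick, minus a few million neurons.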

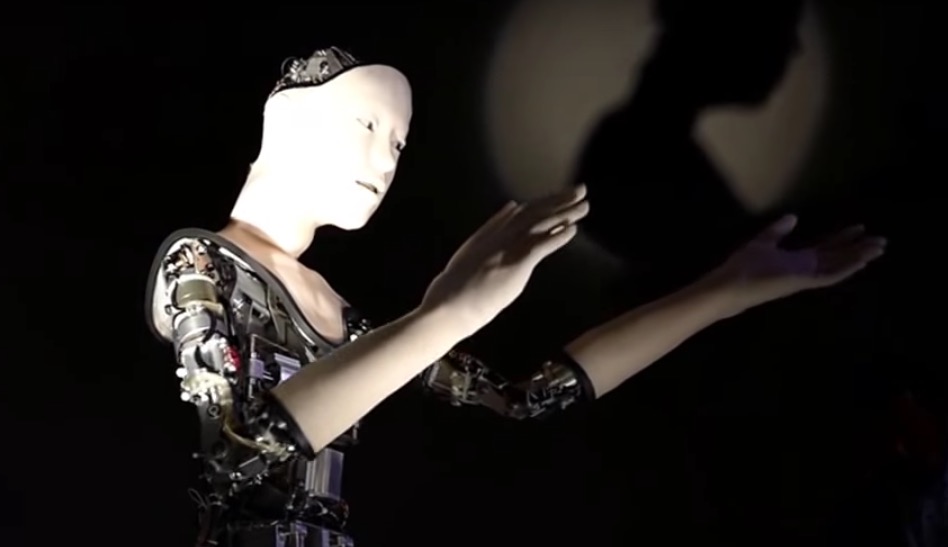

It means robots can come up with their own solutions instead of relying on humans, and that’s how “Alter,” the robot in the video, decides how to move and produce its eerie ~~revolution-inciting cries~~ vocalizations. Alter has sensors that measure its environment for proximity, temperature, and humidity, much like our own senses inform our decision-making process. Data from those sensors is fed into the robot’s neural network, which then decides how to move. Basically, you’re watching the robot’s creep-tastic interpretive dance of whatever room it’s in at the time.
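To make the sensors-in, movement-out idea concrete, here is a minimal sketch of that pipeline. Everything in it is an assumption for illustration: the layer sizes, the scaling constants, the random untrained weights, and the names `make_layer`, `forward`, and `decide_movement` are all hypothetical, and none of it reflects how Alter's actual controller is built.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Build a layer of random weights: n_in weights plus one bias per neuron."""
    return [[rng.uniform(-1, 1) for _ in range(n_in + 1)] for _ in range(n_out)]


def forward(layer, inputs):
    """Each neuron squashes its weighted sum (plus bias, the last entry) into -1..1."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + row[-1])
        for row in layer
    ]


def decide_movement(proximity_m, temperature_c, humidity_pct, hidden, output):
    """Turn three sensor readings into joint targets between -1 and 1."""
    # Assumed scaling so every input lands roughly in the 0..1 range.
    inputs = [
        min(proximity_m / 5.0, 1.0),
        temperature_c / 40.0,
        humidity_pct / 100.0,
    ]
    return forward(output, forward(hidden, inputs))


rng = random.Random(42)          # fixed seed so the "dance" is repeatable
hidden = make_layer(3, 4, rng)   # 3 sensors feeding 4 hidden neurons
output = make_layer(4, 2, rng)   # 4 hidden neurons driving 2 imaginary joints

joints = decide_movement(1.2, 24.0, 55.0, hidden, output)
print(joints)
```

Change the room (the three readings) and the joint targets change with it, with no hand-written "if someone is close, wave" rule anywhere in sight.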

Alter’s movement may be random and purposeless, but it reminds me a lot of human infants just testing out what they can do, which is unsettling to say the least. From the YouTube description: “[A] new type of robot made by Takashi Ikegami (University of Tokyo), Hiroshi Ishiguro (Osaka University) and others — at Odaiba’s Miraikan museum on July 29. The robot can be viewed by the public until Aug. 6, 2016.”
