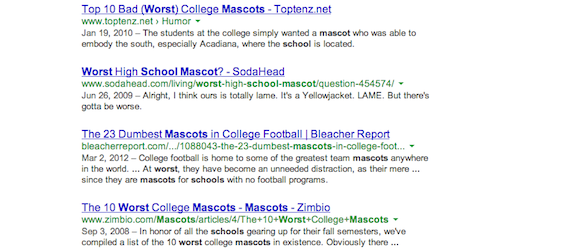

This looks an awful lot like the Google search results for “worst school mascots,” doesn’t it? Actually, it’s a search I just made (in an incognito tab, because that’s where any blogger worth her salt does her investigatory Google fiddling) that does not include the word “worst” at all. Turns out, enough people out there still casually use “gay” as a blanket pejorative for pretty much anything that Google’s algorithm also treats it as a pejorative. And Google doesn’t seem inclined to do anything about it.

After BuzzFeed pointed out this quirk of Google’s search results algorithm earlier this week, Google’s official response was this:

> Google’s results, including when a search term is synonymized with another, are a reflection of content on the web and how people search. These results are determined by algorithms and we don’t manually correct this process, but we are always looking at how we can improve our algorithms.

I respect that Google works with some pretty significant systems of code, ones that I probably couldn’t hope to understand without years of study (somehow, I don’t think my hazy memories of Python 101 are going to get me very far). And as a company, it’s done some great work on the LGBTQ rights front. But Google has run into issues of language in its online products before. For example, when Google+ launched, the only part of your profile that could not be switched to private was your gender, and the only choices were male, female, and other. This was a problem if you didn’t identify with any of those labels, and also for a lot of women who didn’t want to advertise their gender for fear of judgement from a male-dominated userbase.

Quizzical users have also called into question the specific words that Google has disallowed from its instant search results. Nobody wants explicit material popping up on their screen because their search term shares a bunch of initial letters with the name of a porn movie or something. But shortly after instant search debuted, it was discovered that in addition to explicit words, Google also censored “lesbian” and “bisexual,” even though a search for those terms doesn’t show porn on the first page.

Shortly after concerns were raised about G+’s gender identification problems, the company allowed users to switch their gender to private. Last year, it seemed the search giant might have allowed “bisexual” back into the light as well, but the word still displays a blank page. The fact is: Google can manually moderate its algorithms. Instant search and autofill won’t display anything that begins with “I hate,” and many searches about suicide methods instead serve up links for suicide hotlines. I think the company should really think about extending such manually curated search results to uncoupling “gay” from its all too common use as a general negative term. Ideally, Google should be inferring the subtleties in our word choices in order to deliver better results, but I think this is one case where it shouldn’t.